personal |

Reverse emulating the NES!

(31 May 2018 at 18:30) |

Oops, I screwed up by forgetting that May doesn't have 32 days. Especially silly since I finished the content of this post days ago, and all I had to do was post it! Backdated 24 hours [cheating].

I finished the project I've been talking about for a few posts, and uploaded two videos:

Reverse emulating the NES to give it SUPER POWERS!

Making of "Reverse emulating the NES..."

The first video is the project itself, a weird self-explanatory joke, and the second one is a longer explanation of some of the technical stuff and the process that I went through to create it. Of course, up to you, but I think both have something to offer for the audience that reads Tom 7 Radar. :)

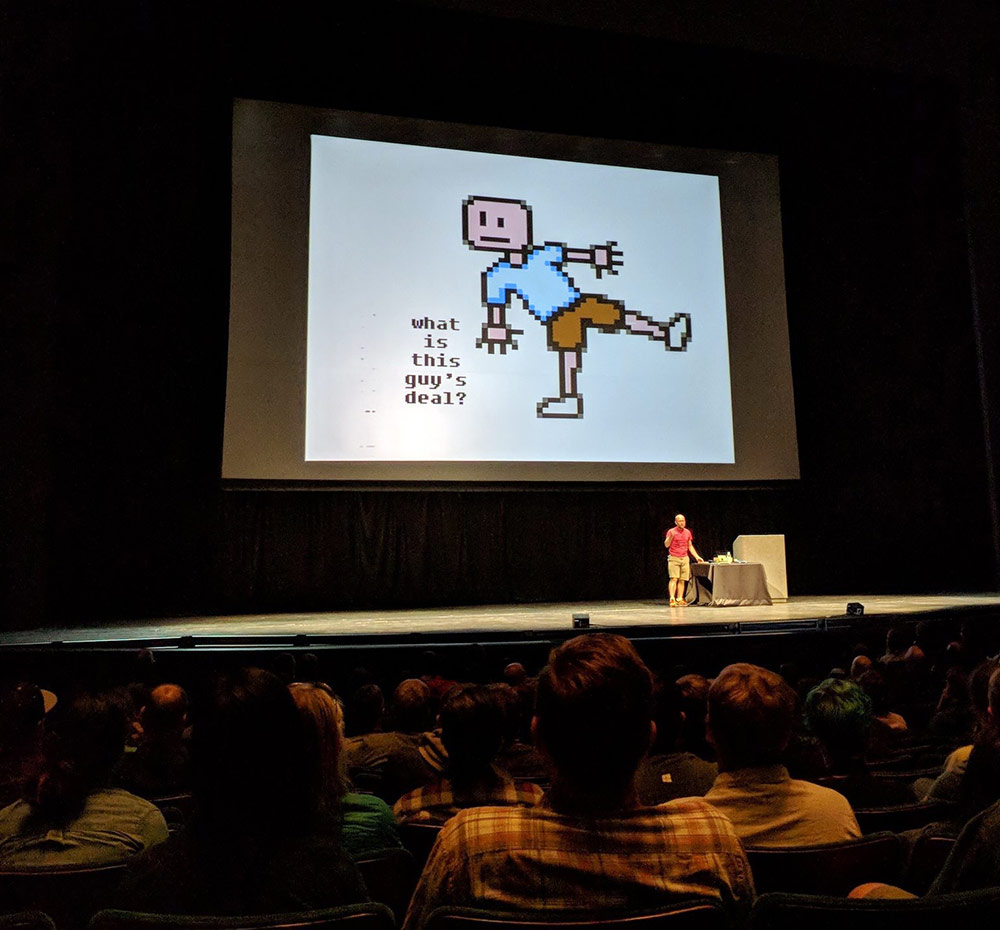

I went to Seattle to present basically the first video as a talk to two different audiences (first at Deconstruct and then at UW's PoCSci, which is like their version of SIGBOVIK). I was delighted to have the privilege to do both of these without so much as telling anybody involved the title of my talk, let alone anything about its context or e.g. weird equipment, which allowed me to do a more sneak-attack "reveal." This was very fun. Here's me on the big stage. The screen is 40' diagonal, so these pixels are about 1.88 square inches each!

Tom 7 at Deconstruct

It was a fun project but pretty hectic. Now I am aggressively relaxing by cleaning my basement and playing some video games (Steamworld Dig 2; great so far!). I have some ideas for the next thing, but also a bunch of travel coming up, so I'm taking it easy. |


This is a great project.

What I don't understand though is what makes the addresses the PPU reads so hard to predict on your side? Doesn't it basically read the attributes and tiles all in the same deterministic sequence in all frames? I mean, perhaps the scrolling can nudge the sequence around a bit, but I still don't understand why it matters so much that it's difficult for the reverse emulator to read the addresses from the bus.

Also, as for the sound, could you reuse some parts of the Tasbot project demonstrated at AGDQ 2017? In that one, they also produced sound on an unmodified NES by feeding it data from a fast computer. You'll still have to figure out how to generate the CPU input, but perhaps you can use their software to figure out what the CPU should tell the sound chip. |

| Kings Road |

Predicting the PPU is not that hard, but I don't have that many spare cycles to do it. The main challenges are:

- Address reads are noisy, but each address only tells you a little about where rendering is on the screen (nametable/attribute reads are nice and deterministic, but don't tell you the scanline; pattern table reads depend on the tile returned by the nametable, but do include low-order bits of the scanline).

- The PPU does some irregular stuff during "hblank" to read sprites and to prep the next scanline (I don't handle this and I think it's the main reason the left column of the screen is kinda messed up)

- You want each code path to be similar in length, to reduce timing jitter

Ultimately what I did here was pretty simple, but it's also delicate wrt timing, and went through several iterations (some were fancier and worked worse).
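To make the first bullet concrete, here is a minimal sketch of what a single address on the PPU bus can and cannot tell you. It is not the code from the project; the names `PpuRead` and `classify_ppu_read` are made up for illustration, and it assumes the standard NES PPU memory map (pattern tables below `0x2000`, 1 KB nametables above that, with the last 64 bytes of each nametable holding attributes).

```python
from typing import NamedTuple, Optional


class PpuRead(NamedTuple):
    kind: str                 # "pattern", "nametable", "attribute", or "other"
    tile_col: Optional[int]   # 0..31, nametable reads only
    tile_row: Optional[int]   # 0..29, nametable reads only
    fine_y: Optional[int]     # 0..7, pattern reads only (row within the tile)


def classify_ppu_read(addr: int) -> PpuRead:
    """Classify one address seen on the PPU bus (hypothetical helper)."""
    addr &= 0x3FFF  # the PPU address space is 14 bits wide
    if addr < 0x2000:
        # Pattern table read: the low 3 bits are the pixel row inside the
        # 8x8 tile, i.e. the low-order bits of the (scrolled) scanline.
        return PpuRead("pattern", None, None, addr & 0x7)
    if addr < 0x3F00:
        offset = addr & 0x3FF  # position within one 1 KB nametable
        if offset < 0x3C0:
            # Tile read: gives the tile column and 8-line tile row,
            # but not which of the 8 scanlines in that row is being drawn.
            return PpuRead("nametable", offset & 0x1F, offset >> 5, None)
        return PpuRead("attribute", None, None, None)
    return PpuRead("other", None, None, None)


print(classify_ppu_read(0x2041))  # nametable read: column 1, row 2
print(classify_ppu_read(0x1235))  # pattern read: fine_y = 5
```

Combining the two kinds of reads is what pins down a position, and doing that within a few cycles per read is where the timing budget goes.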

For sound: I need to watch this tasbot video since several people have mentioned it! I think making sound is actually pretty straightforward; for me it was just a matter of running out of time (for the project before my talks) and time (within a frame to do significant computation). |

| Amazing |

Hey Tom,

Is your Deconstruct talk available on YouTube or elsewhere? I’d like to see it if possible.

Thanks,

Tristan |

Hello,

Are there any diagrams or schematics?

|

Tristan: I think Deconstruct is planning on uploading all of the talks, so you should eventually be able to see it. But I haven't heard anything about them being available yet. They'll probably be on the web page. My talk was very similar to the YouTube video (with the YouTube video probably being a little better since I could edit it down a little and redo sections), so if you've watched that already, you got the gist. :)

bazza: There are some schematics in the source repository (for kicad):

sourceforge.net/p/tom7misc/svn/HEAD/tree/trunk/ppuppy/pcb/

I'm not offering any support for these, though! (: |

| It turns out that Steve Chamberlin from BMOW also had the idea of having a modern computer reply to logic signals in real time as if it was hardware, and he failed: "https://www.bigmessowires.com/2018/06/26/cortex-m4-interrupt-speed-test/" . |

| And here's someone successfully driving the NES in a similar way: "https://www.youtube.com/watch?v=FzVN9kIUNxw" |

Dear Tom 7,

I know that this is kind of silly, but how hard would it be to reverse emulate NES games from a different region? Specifically, there is a NES game that was only released in the PAL region, and it will not run properly on an NTSC NES (this has to do with 3D rendering and the difference between processor timing in the two regions).

Again, I know it is silly, and reverse engineering the 6502 assembly code is probably easier, but how hard would it be to skip the dithering and all that and get the Raspberry Pi to emulate a PAL NES? |

| AFAIK there would be no reason that wouldn't work (as long as running at 6/5 speed or some frame-dropping/telecine would be ok), although the emulator would have to support the game. Sounds like it may have some fancy mapper if it's doing 3D rendering, so that's not a given! Probably much simpler to play on an emulator on the computer though :) |
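As a rough sketch of the second option (repeating frames rather than running fast), the mapping below picks which emulated PAL frame to show on each NTSC output frame. It assumes nominal rates of exactly 50 and 60 frames per second, which real consoles only approximate, and `source_frame_for_output` is a hypothetical helper, not something from the project.

```python
def source_frame_for_output(output_index: int,
                            src_fps: int = 50,
                            out_fps: int = 60) -> int:
    """Index of the emulated (source) frame to show on a given output frame.

    With 50 -> 60 this repeats one source frame out of every five, so the
    game runs at its intended speed with a slight periodic judder.
    """
    return output_index * src_fps // out_fps


# First 12 output frames: source frames 0 and 5 are each shown twice.
schedule = [source_frame_for_output(i) for i in range(12)]
print(schedule)  # [0, 0, 1, 2, 3, 4, 5, 5, 6, 7, 8, 9]
```

Running at 6/5 speed instead would simply show source frame `i` on output frame `i`, at the cost of faster gameplay and higher-pitched timing.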

| It is easier to run on an emulator for sure, and the mapper is in fact unique. The "NTSC" version does not work well on NTSC hardware, despite there being a publicly available "NTSC" version of the ROM. The real NTSC prototype version was never released, and the current owner of the EPROM is not willing to sell because they still hope to make a legit working copy. Here is a link to the "NTSC" version (NTSC in name only; it really needs a PAL emulator to be fully playable): www.elitehomepage.org/nes/index.htm |

| I just found a video that mentions your reverse emulating the NES project: "https://youtu.be/dD37VRCf8eQ?t=948" |

| And here's yet another project where someone builds a custom Game Boy cartridge that behaves differently from normal cartridges. The power here can hardly be called a superpower, since a contemporary cartridge manufacturer could probably have done it with the hardware they had available. https://www.youtube.com/watch?v=ix5yZm4fwFQ |

| Any chance to release the code so we can build this ourselves? |

All the source code is here (with schematics, etc.):

https://sourceforge.net/p/tom7misc/svn/HEAD/tree/trunk/ppuppy/

This is not something you can just download and run, but it could be a useful reference if you want to do a similar project yourself. |
